Interpreting GWR results

Release 9.3

Last modified January 28, 2009

Output generated from the GWR tool includes:

- Output feature class.

- Optional coefficient raster surfaces.

- Message window report of overall model results.

- Supplementary table showing model variables and diagnostic results.

- Prediction output feature class.

Each of the above outputs is shown and described below as a series of steps for running GWR and interpreting GWR results. You will typically begin your regression analysis with Ordinary Least Squares (OLS). See Regression Analysis Basics and Interpreting OLS Regression Results for more information. A common approach to regression analysis is to identify the very best OLS model possible before moving to GWR. This approach provides the context for the steps below.

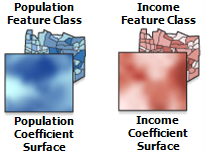

(A) After you have identified one or more candidate regression models using the OLS regression tool, run the model using GWR. Exclude from your GWR model any regional binary (dummy) variables, as these will create problems with local collinearity and are not needed with GWR. You will need to provide an input feature class with the dependent variable you want to model/explain and all of the model explanatory variables. You will also need to provide a pathname for the output feature class, a kernel type (either Fixed or Adaptive), and a bandwidth method (AICc, CV, or a user-provided value). If, for Bandwidth Method, you select Bandwidth Parameter, you will need to provide a specific distance (for FIXED Kernel Type) or a specific number of neighbors (for ADAPTIVE Kernel Type). You can also provide values for the many optional parameters described in the GWR tool documentation. One especially interesting optional parameter is labeled "Coefficient Raster Workspace". When you provide a folder pathname for this parameter, the GWR tool will create coefficient raster surfaces (described below) for the model intercept and each explanatory variable.

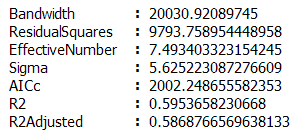

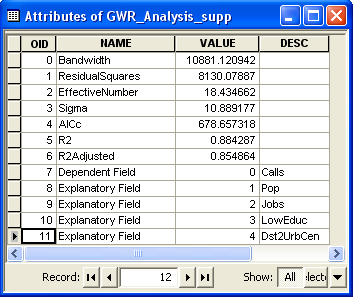

(B) Examine the statistical report printed to the message window. Each of the diagnostics reported is described below.

- Bandwidth or Neighbours: this is the bandwidth or number of neighbors used for each local estimation, and is perhaps the most important parameter for Geographically Weighted Regression. It controls the degree of smoothing in the model. Typically, you will let the program choose a bandwidth or neighbor value for you by selecting either "AICc" (the corrected Akaike Information Criterion) or "CV" (Cross Validation) for the Bandwidth Method parameter. Both of these options try to identify an optimal fixed distance or optimal adaptive number of neighbors. Since the criterion for "optimal" is different for AICc than it is for CV, it is common to get a different optimal value from each. You may also provide an exact fixed distance or a particular number of neighbors by selecting BANDWIDTH PARAMETER for the Bandwidth Method.

- ResidualSquares: this is the sum of the squared residuals in the model (the residual being the difference between an observed y value and its estimated value returned by the GWR model). The smaller this measure, the closer the fit of the GWR model to the observed data. This value is used in a number of other diagnostic measures.

- EffectiveNumber: this value reflects a tradeoff between the variance of the fitted values and the bias in the coefficient estimates, and is related to the choice of bandwidth. As the bandwidth approaches infinity, the geographical weights for every observation approach 1, and the coefficient estimates will be very close to those for a global OLS model. For very large bandwidths, the effective number of coefficients approaches the actual number; local coefficient estimates will have a small variance but will be quite biased. Conversely, as the bandwidth approaches zero, the geographical weights for every observation approach zero with the exception of the regression point itself. For extremely small bandwidths, the effective number of coefficients is the number of observations, and the local coefficient estimates will have a large variance but low bias. The effective number is used to compute a number of diagnostic measures.

- Sigma: this value is the square root of the normalized residual sum of squares where the residual sum of squares is divided by the effective degrees of freedom of the residual. This is the estimated standard deviation for the residuals. Smaller values of this statistic are preferable. Sigma is used for AICc computations.

- AICc: this is a measure of model performance and is helpful for comparing different regression models. Taking into account model complexity, the model with the lower AICc value provides a better fit to the observed data. AICc is not an absolute measure of goodness of fit, but is useful for comparing models with different explanatory variables as long as they apply to the same dependent variable. If the AICc values for two models differ by more than 3, the model with the lower AICc is held to be better. Comparing the GWR AICc value to the OLS AICc value is one way to assess the benefits of moving from a global model (OLS) to a local regression model (GWR).

- R2: R-Squared is a measure of goodness of fit. Its value varies from 0.0 to 1.0, with higher values being preferable. It may be interpreted as the proportion of dependent variable variance accounted for by the regression model. The denominator for the R2 computation is the sum of squared deviations of the dependent variable values from their mean. Adding an extra explanatory variable to the model does not alter the denominator, but does alter the numerator; this gives the impression of improvement in model fit that may not be real. See Adjusted R2 below.

- R2Adjusted: because of the problem described above for the R2 value, calculations for the adjusted R-squared value normalize the numerator and denominator by their degrees of freedom. This has the effect of compensating for the number of variables in a model, and consequently the Adjusted R2 value is almost always smaller than the R2 value. However, in making this adjustment, you lose the interpretation of the value as a proportion of the variance explained. In GWR, the effective number of degrees of freedom is a function of the bandwidth so the adjustment may be quite marked in comparison to a global model like OLS. For this reason, the AICc is preferred as a means of comparing models.
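The diagnostics above are linked by simple arithmetic, which the following sketch illustrates. It is not the GWR tool's implementation. It assumes that the residual degrees of freedom equal the number of observations minus EffectiveNumber and that R2 uses the conventional total sum of squares about the mean. The function names and sample values are hypothetical.

```python
import math

def gwr_style_diagnostics(observed, predicted, effective_number):
    """Compute RSS, sigma, R2 and adjusted R2 from observed and fitted values.

    Assumption: residual degrees of freedom = n - effective_number.
    """
    n = len(observed)
    residuals = [y - y_hat for y, y_hat in zip(observed, predicted)]
    rss = sum(r * r for r in residuals)              # ResidualSquares
    mean_y = sum(observed) / n
    tss = sum((y - mean_y) ** 2 for y in observed)   # total sum of squares
    resid_df = n - effective_number
    sigma = math.sqrt(rss / resid_df)                # Sigma
    r2 = 1.0 - rss / tss
    # Numerator and denominator are each divided by their degrees of freedom.
    r2_adjusted = 1.0 - (rss / resid_df) / (tss / (n - 1))
    return {"ResidualSquares": rss, "Sigma": sigma,
            "R2": r2, "R2Adjusted": r2_adjusted}

def prefer_by_aicc(aicc_a, aicc_b, threshold=3.0):
    """Return 'a' or 'b' for the lower AICc, or None if the gap is within the threshold."""
    if abs(aicc_a - aicc_b) <= threshold:
        return None
    return "a" if aicc_a < aicc_b else "b"

# Hypothetical observed and fitted values for five features.
observed = [1.0, 2.0, 3.0, 4.0, 5.0]
predicted = [1.1, 1.9, 3.2, 3.8, 5.0]
diagnostics = gwr_style_diagnostics(observed, predicted, effective_number=2.0)
```

With these made-up values the adjusted R2 comes out slightly below R2, matching the behavior described for R2Adjusted.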

The bandwidth units depend on the specified Kernel Type. If you select FIXED, the bandwidth value will reflect a distance in the same units as the input feature class (e.g., if the input feature class is projected using UTM coordinates, the distance reported will be in meters). If you select ADAPTIVE, the bandwidth distance will change according to the spatial density of features in the input feature class. The bandwidth becomes a function of the number of nearest neighbors such that each local estimation is based on the same number of features. Instead of a specific distance, the number of neighbors used for the analysis is reported.
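To make the role of the bandwidth concrete, the sketch below computes geographical weights with a Gaussian kernel. The kernel form, the treatment of the regression point as its own nearest feature, and all names and distances are assumptions made for illustration; the GWR tool's exact weighting may differ.

```python
import math

def gaussian_weight(distance, bandwidth):
    """Gaussian kernel weight: 1 at the regression point, decaying with distance."""
    return math.exp(-0.5 * (distance / bandwidth) ** 2)

def adaptive_bandwidth(distances, neighbors):
    """Adaptive kernel: use the distance to the k-th nearest feature as the bandwidth."""
    return sorted(distances)[neighbors - 1]

# Hypothetical distances (in meters) from one regression point to five features,
# including the regression point itself at distance 0.
distances = [0.0, 100.0, 250.0, 400.0, 1200.0]

fixed = [gaussian_weight(d, 300.0) for d in distances]   # FIXED kernel, 300 m bandwidth
wide = [gaussian_weight(d, 1e9) for d in distances]      # huge bandwidth: weights approach 1
narrow = [gaussian_weight(d, 1e-3) for d in distances]   # tiny bandwidth: only the point itself counts
local_bw = adaptive_bandwidth(distances, neighbors=4)    # ADAPTIVE kernel with 4 neighbors
```

In a dense part of the study area the fourth-nearest feature is close, so the adaptive bandwidth shrinks; in a sparse area it grows, which is why a neighbor count is reported instead of a distance.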

Message window diagnostics are written to a supplementary table (_supp) along with summary information about model variables and parameters.

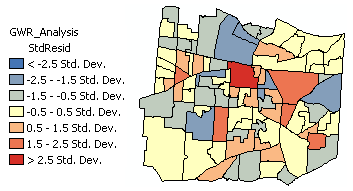

(C) Examine the output feature class residuals. Over and under predictions for a well specified regression model will be randomly distributed. Clustering of over and/or under predictions is evidence that you are missing at least one key explanatory variable. Examine the patterns in your OLS and GWR model residuals to see if they provide clues about what those missing variables might be. Run the Spatial Autocorrelation (Moran's I) tool on the regression residuals to ensure they are spatially random. Statistically significant clustering of high and/or low residuals (model under and over predictions) indicates the GWR model is misspecified.
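As a rough illustration of what the residual check measures, the sketch below computes the global Moran's I index for a handful of residuals. It covers only the index itself, not the z-score or p-value that the Spatial Autocorrelation tool reports, and the binary chain of neighbors is a hypothetical layout chosen to keep the example small.

```python
def morans_i(values, weights):
    """Global Moran's I for values, given spatial weights as {(i, j): w_ij}."""
    n = len(values)
    mean = sum(values) / n
    z = [v - mean for v in values]                      # deviations from the mean
    s0 = sum(weights.values())                          # sum of all weights
    cross = sum(w * z[i] * z[j] for (i, j), w in weights.items())
    return (n / s0) * cross / sum(d * d for d in z)

def chain_weights(n):
    """Binary weights linking each feature to its neighbors along a line."""
    w = {}
    for i in range(n - 1):
        w[(i, i + 1)] = 1.0
        w[(i + 1, i)] = 1.0
    return w

w = chain_weights(4)
clustered = [1.0, 2.0, 3.0, 4.0]        # similar residuals sit next to each other
alternating = [1.0, -1.0, 1.0, -1.0]    # neighbors always have opposite signs
i_clustered = morans_i(clustered, w)
i_alternating = morans_i(alternating, w)
```

A positive index, as for the clustered residuals here, is the pattern that suggests a missing explanatory variable; a strongly negative index indicates a dispersed pattern.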

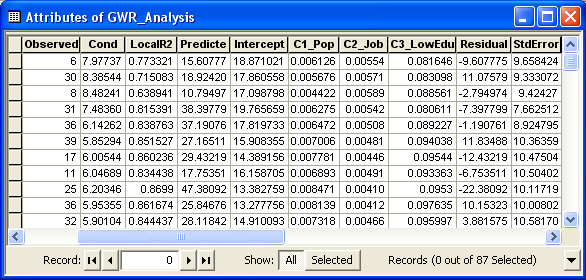

In addition to regression residuals, the output feature class table includes fields for observed and predicted y values, condition number (cond), Local R2, and explanatory variable coefficients and standard errors:

- Condition Number: this diagnostic evaluates local collinearity. In the presence of strong local collinearity, results become unstable. Results associated with condition numbers larger than 30 may be unreliable.

- Local R2: these values range between 0.0 and 1.0 and indicate how well the local regression model fits observed y values. Very low values indicate the local model is performing poorly. Mapping the Local R2 values to see where GWR predicts well and where it predicts poorly may provide clues about important variables that may be missing from the regression model.

- Predicted: these are the estimated (or fitted) y values computed by GWR.

- Residuals: to obtain the residual values, the fitted y values are subtracted from the observed y values. Standardized residuals have a mean of zero and a standard deviation of 1. A cold-to-hot rendered map of standardized residuals is automatically added to the TOC when GWR is executed in ArcMap.

- Coefficient Standard Error: these values measure the reliability of each coefficient estimate. Confidence in those estimates is higher when standard errors are small in relation to the actual coefficient values. Large standard errors may indicate problems with local collinearity.
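One way to act on the fields above is to screen the output table for features whose local results deserve a closer look. The sketch below does this on plain Python dictionaries; the keys `cond`, `coef` and `stderr` and the sample rows are hypothetical stand-ins for the actual output field names and values.

```python
# Hypothetical rows standing in for records of the output feature class table.
rows = [
    {"id": 1, "cond": 12.4, "coef": 0.80, "stderr": 0.10},
    {"id": 2, "cond": 41.7, "coef": 0.35, "stderr": 0.30},
    {"id": 3, "cond": 28.9, "coef": -0.05, "stderr": 0.20},
]

def review_local_results(rows, cond_limit=30.0):
    """Flag possible local collinearity and relate each coefficient to its standard error."""
    report = []
    for row in rows:
        report.append({
            "id": row["id"],
            # Condition numbers larger than 30 suggest unreliable results.
            "collinearity_warning": row["cond"] > cond_limit,
            # Larger ratios mean the standard error is small relative to the coefficient.
            "coef_to_stderr": abs(row["coef"]) / row["stderr"],
        })
    return report

report = review_local_results(rows)
flagged_ids = [r["id"] for r in report if r["collinearity_warning"]]
```

The ratio is reported rather than thresholded, since the text gives a cutoff only for the condition number.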

(D) Examine the coefficient raster surfaces created by GWR (and/or, with polygon data, a graduated color rendering of the feature-level coefficients) to better understand regional variation in the model explanatory variables. When you use GWR to model some variable (the dependent variable), you are generally interested in predicting values or in understanding the factors that contribute to dependent variable outcomes. You are also interested, however, in examining how spatially consistent (stationary) the relationships between the dependent variable and each explanatory variable are across the study area. Examining the coefficient distribution as a surface shows where and how much variation is present. You can use your understanding of this variation to inform policy:

- Statistically significant global variables that exhibit little regional variation inform region-wide policy.

- Statistically significant global variables that exhibit strong regional variation inform local policy.

- Some variables may not be globally significant because in some regions they are positively related to the dependent variable and in others they are negatively related.
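The last point can be seen with a few made-up numbers. In the sketch below, the local coefficients for one explanatory variable are positive in some regions and negative in others, so their average is close to zero even though the local relationships are strong. The function name and values are hypothetical.

```python
def summarize_coefficients(coefficients):
    """Summarize how much one variable's local coefficients vary across features."""
    lowest, highest = min(coefficients), max(coefficients)
    return {
        "min": lowest,
        "max": highest,
        "mean": sum(coefficients) / len(coefficients),
        # True when the relationship is negative somewhere and positive elsewhere.
        "sign_changes": lowest < 0 < highest,
    }

# Hypothetical local coefficients: negative in two regions, positive in two others.
local_coefficients = [-0.6, -0.4, 0.5, 0.5]
summary = summarize_coefficients(local_coefficients)
```

A summary like this is only a starting point; mapping the coefficients shows where the sign changes occur.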

(E) Map GWR predictions. GWR can be used for prediction when it is applied to sampled data. Specify a feature class containing all of the explanatory variables for locations where the dependent variable is unknown. GWR calibrates the regression equation using known dependent variable values from the input feature class, and then creates a new output feature class with dependent variable estimates.
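The idea behind GWR prediction can be sketched as a weighted least squares fit centered on each prediction location. The example below handles a single explanatory variable and assumes a Gaussian kernel with a fixed bandwidth. It illustrates the technique only and is not the GWR tool's code; all names, coordinates and values are hypothetical.

```python
import math

def gaussian_weight(distance, bandwidth):
    """Gaussian kernel weight that decays with distance from the prediction location."""
    return math.exp(-0.5 * (distance / bandwidth) ** 2)

def local_predict(samples, target_xy, target_x, bandwidth):
    """Predict y at target_xy from samples given as (east, north, x, y) tuples."""
    weights = [
        gaussian_weight(math.hypot(e - target_xy[0], n - target_xy[1]), bandwidth)
        for e, n, _, _ in samples
    ]
    total = sum(weights)
    # Weighted means of the explanatory and dependent variables.
    mean_x = sum(w * s[2] for w, s in zip(weights, samples)) / total
    mean_y = sum(w * s[3] for w, s in zip(weights, samples)) / total
    # Weighted least squares slope and intercept for this location only.
    sxx = sum(w * (s[2] - mean_x) ** 2 for w, s in zip(weights, samples))
    sxy = sum(w * (s[2] - mean_x) * (s[3] - mean_y) for w, s in zip(weights, samples))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept + slope * target_x

# Hypothetical sampled features whose dependent variable follows y = 2x + 1.
samples = [
    (0.0, 0.0, 1.0, 3.0),
    (10.0, 0.0, 2.0, 5.0),
    (0.0, 10.0, 4.0, 9.0),
    (10.0, 10.0, 7.0, 15.0),
]
# Predict at an unsampled location where the explanatory variable equals 3.
prediction = local_predict(samples, target_xy=(5.0, 5.0), target_x=3.0, bandwidth=8.0)
```

Because the sample data lie exactly on one line, every local fit recovers the same slope and intercept. With real data the fitted coefficients change from one prediction location to the next.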